This is the big AI risk that no one is talking about - where is the Responsible AI movement?

Taylor Swift deepfakes, realistic and sexualized AI-generated images, went viral last week. While deepfakes are not new, they are becoming increasingly realistic and easy to create, thanks to advancements in generative Artificial Intelligence (AI). Hyper-realistic AI-generated deepfakes are also becoming political tools – some seemingly bringing dead politicians back to life, like Indonesia’s late former President Suharto.

How can we distinguish between AI and humans? Responsible AI movements and regulations in North America and Europe currently prioritize the development of content authentication tools. While necessary, these Responsible AI efforts to date are not sufficient. Developing foolproof ways to differentiate between AI- vs. human-generated content is essential. But even if we achieve this one day, humans would still be left with the more significant challenge of distinguishing whom – or what – to befriend, love, and hate.

Today’s Responsible AI efforts reflect a narrow, short-term understanding of AI and the risks it will pose to human society. In this article, I explain the broader, longer-term “how to distinguish between AI and human” dilemma, the potential costs of failing to prepare for it, and initial recommendations for Responsible AI ethicists and regulators to consider.

Responsible AI’s content authentication efforts are necessary but not sufficient

When ChatGPT 3.5 was launched in late November 2022, an early wave of critiques heralded apocalyptic scenarios in which AI takes over the world and destroys humanity in one fell swoop. Over the last ~fourteen months, a more measured wave of AI critiques has emerged – Responsible AI. While there is no unified movement, Responsible AI generally refers to developing and deploying AI in line with principles such as fairness, security, privacy, transparency, and reliability.

Emerging AI regulations broadly reflect Responsible AI tenets. In line with the principle of transparency, both the Biden administration’s Executive Order on AI and the latest draft of the European Union’s AI Act (E.U. AI Act) explicitly address the need to distinguish between AI- vs. human-generated content. The Executive Order on AI specifies the need for content authentication and watermarking technologies “… to make it easy for Americans to know that the communications they receive from their government are authentic.” The E.U. AI Act specifies the need to detect and label AI-generated content “to enable the public to distinguish AI-generated content effectively.”

Currently, there are no foolproof ways to authenticate whether content was generated by AI vs. humans. Varying levels of progress have been made, depending on the specific domain of AI-generated content. So far, text-based content is proving much more difficult to authenticate than image-based and audio-based content. There are significant concerns that it may be impossible to develop and enforce foolproof ways to authenticate AI- vs. human-generated text-based content.

Authentication is essential to social trust. How do I know you are who you say you are? Before I can begin deciding whether to trust someone’s words and actions, I need to trust that they are who they say they are. We already live in a world where identity theft and impersonation of other humans – before adding AI to the mix – have eroded social trust. The erosion of social trust will only accelerate as AI is increasingly used to create hyperrealistic deepfakes that impersonate either real humans or entirely fictional beings. With upcoming elections worldwide, there is significant cause for concern about the weaponization of generative AI-assisted deepfakes.

But deepfakes are just the tip of the iceberg. Even if one day we were to develop and enforce foolproof content authentication mechanisms successfully, we would still be left with a broader, longer-term problem – how to remain emotionally detached from machines, even when we know that they are machines.

We will become emotionally involved with AI as it becomes 3-D, even if we know it’s a machine

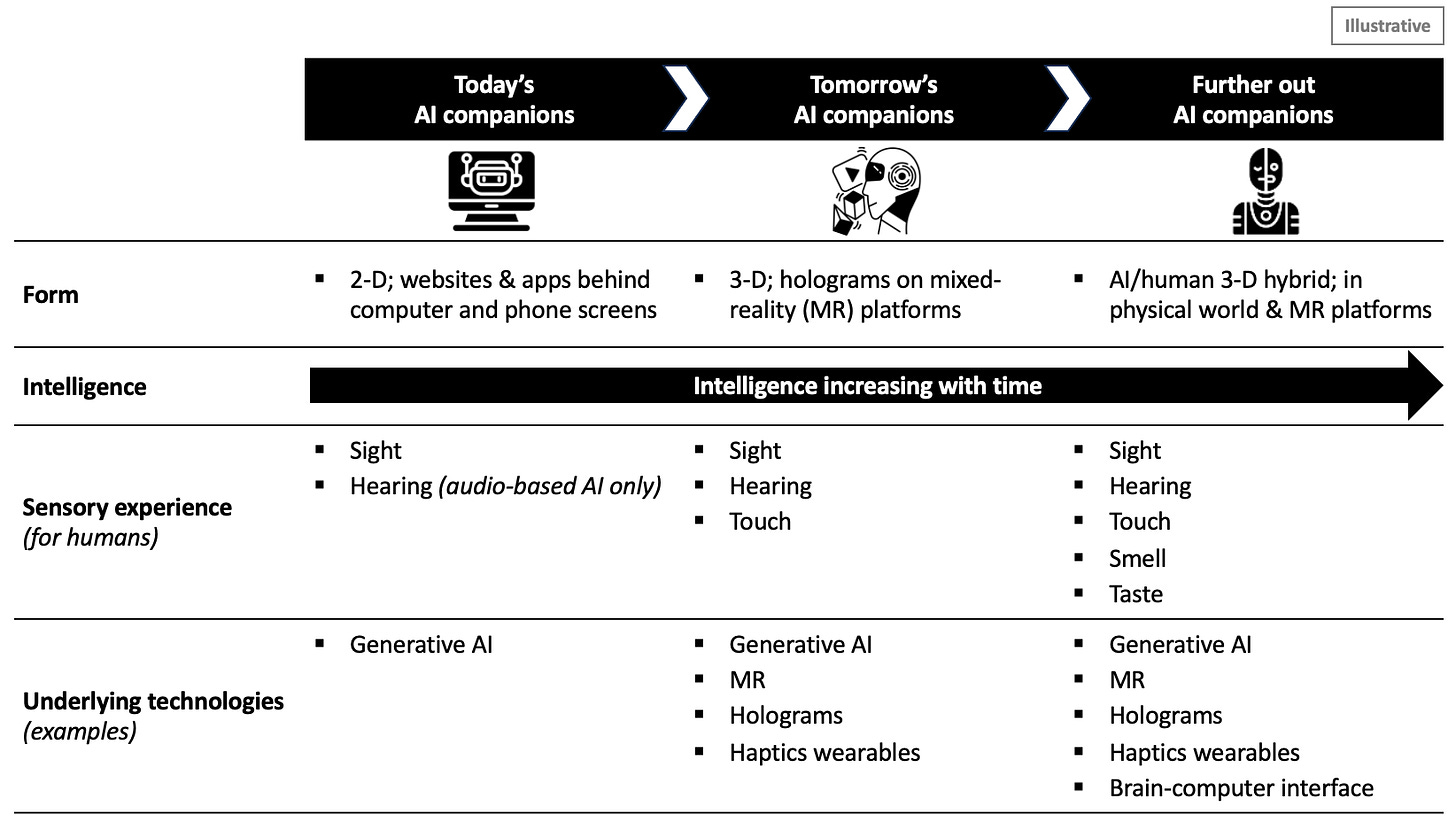

Today, AI is 2-D. Tomorrow, AI will be 3-D.

While the world has been talking nonstop about ChatGPT, significant advancements have been underway in other technologies like Mixed Reality, holograms, and haptics wearables. Mixed Reality (MR) refers to a blending of physical and digital spaces, in which digital experiences are superimposed onto our physical world and vice versa. Holograms are 3-D digital projections that appear in our physical world. Haptics wearables enable us to physically experience simulated, digital sensory experiences.

The convergence of these technologies will give rise to 3-D AI entities that look and feel exactly like humans when we interact in hologram form with others, both humans and AI, on MR platforms. Haptics wearables will allow us to physically interact with (touch, smell, hear, see, and taste) human and AI holograms and our surrounding MR environments.

Figure 1: How our AI experiences will change in shape and form over time

Hologram-based MR experiences have yet to gain mass traction and appeal. But they are coming. Much like it took decades for personal laptops and smartphones to evolve from gigantic computer systems and bulky brick-like phones, immersive MR technologies will also take some time to evolve and eventually gain traction in everyday life. Tomorrow, Apple’s much-anticipated Vision Pro, an MR headset, is being released in the U.S. Zoom will concurrently launch its Vision Pro app to enable more immersive Zoom conferencing experiences. Apple and Zoom are not the only companies investing heavily in Mixed Reality technologies – Sony, Samsung, Xreal, and many others have been making headway.

As immersive MR experiences gain everyday traction over time, our interactions with AI will become uncannily similar to interactions with humans. As human-AI interactions become increasingly like human-human interactions – not just in content across voice, text, and image domains but also in shape, body, and form – we will increasingly develop emotional relationships with machines – even when we know we are interacting with machines.

This is already happening with today’s 2-D AI. Many startups are providing adult AI platforms where humans can toggle among character traits and features to build their ideal AI girlfriend. Interest in 2-D girlfriend AI bots has been remarkable over the last ~fourteen months, as evidenced by Figure 2 below. Since the launch of ChatGPT 3.5 on November 30, 2022, many people around the world have already entered romantic relationships with AI. AI relationships are not restricted to the adult industry or to romance. In fact, AI relationships can take on virtually any form of human relationship imaginable – from personalized tutors and companions for children, to companions that help senior citizens combat loneliness, to mental health allies.

If humans are already becoming emotionally entangled with AI in its 2-D form, fully knowing they are dealing with machines, how will humans feel about AI in its 3-D form? Historically, multisensory experiences have been more emotionally engaging than single-sense experiences – think about the transitions from radio to television and from silent film to IMAX. Even if we have foolproof ways of knowing that a hologram is machine-based, many of us will not care and will relate to it as a human equivalent.

Figure 2: Worldwide interest over time in “ai girlfriend” search term on Google web search, 2004-present

Source: Google Trends

Once we are emotionally involved with AI, we can easily be manipulated

AI will become a new vector of manipulation. It is well established in the field of psychology that the more emotionally entangled someone is, the easier that person is to manipulate, that is, to control to the manipulator’s advantage. Malicious actors will be able to manipulate the emotions, including trust, that human users place in AI companions. From using data poisoning tactics to deepfakes, malicious actors will be able to infiltrate AI systems that humans regard as their friends and lovers, even if we know they are machines – to present misleading information and drive specific user actions.

Emotional manipulation via technology, such as television and social media platforms, has existed for many decades. But technologies previously used to conduct emotional manipulation were clearly just that – technologies. There was no confusion on our part as to whether the technological platform was sentient or non-sentient. With 3-D AI companions, we may become confused about whether they are just technology or our friends. Imagine our AI friends and loved ones being targeted and exploited to subtly spread misinformation and disinformation in ways that are difficult for us to detect, not only because of technical sophistication but also because “love is blind.” Manipulation via 3-D AI companions we love will be significantly more potent than manipulation via 2-D social media platforms.

Some have dismissed apocalyptic scenarios in which AI machines conspire among themselves and take control over humans by suggesting that we can always pull the power plug – quite literally, because these are machines that run on electricity. This idea assumes that there is still a clear emotional divide between humans and machines. Even if some of us could technically pull the plug, we may need to wage war with other humans who vehemently do not want us to because they are emotionally involved with the machine. Social media has much to teach us about the extremes humans will go to when they are emotionally connected to, and sometimes addicted to, technology and are then cut off from it. Numerous children and teenagers addicted to social media have committed suicide after their concerned parents took away their phones (note that social media has to date existed only as a 2-D platform).

The broader, longer-term “how to distinguish between AI and human” dilemma is fundamentally about not confusing non-sentience with sentience. It is about keeping a clear line between what it means to be human vs. machine. This dilemma will only become more complicated with advancements in brain-computer interface technology, which will create AI-human hybrid entities. Brain-computer interface technology aims to implant machines into humans so that they can control and execute physical actions by thinking. Earlier this week, Elon Musk announced the first human Neuralink brain implant. While still more theory than practice, technologies to power mind uploading, which would scan human brains after death and upload them to the cloud, and to develop general AI, which would be “generally smarter than humans” without domain-specific restrictions (e.g., to just text and voice), are under development.

Three recommendations to address the broader “how to distinguish between AI and human” dilemma

Assess AI risks in the broader, longer-term context of technological convergence that is blurring the line between machines and humans.

Today’s Responsible AI frameworks and regulations primarily address the risks of AI in its current 2-D form. We need to take a broader view of how various technologies are converging to understand the bigger picture of AI and the risks it could pose. AI is just one piece of the bigger technological picture. To comprehensively address AI risks that are emerging on the horizon, we must consider what AI will look like in the future – and this requires considering the convergence already happening across numerous technologies, including AI, MR, haptics, holograms, and brain-computer interface technology.

Classify as “higher risk” any AI whose implicit or explicit use case is human companionship.

Various regulations recognize that AI use cases can pose differing levels of risk. Currently, “AI systems that directly or indirectly act as human companions” are not explicitly identified as high-risk use cases in the U.S. or the E.U.[1] In the latest draft of the E.U. AI Act, “AI systems that manipulate human behavior to circumvent their free will” are among the banned applications. But this refers to AI systems intentionally built to manipulate human behavior. It does not cover AI systems that may inadvertently become vectors of manipulation because of how humans feel about them.

I believe any AI system whose implicit or explicit use case is to develop relationships with humans should be considered higher risk. I am not here to say who – or what – other humans should or should not develop emotional relationships with. Rather, when humans form emotional relationships, we should have the right to know who or what we are investing in (hence the need for foolproof authentication technologies). And, if we choose to become emotionally involved with AI, our eyes must be wide open to the fact that malicious actors can exploit vulnerabilities in our AI friends to manipulate us in ways that we may not realize until it is too late.

Recognize that there can never be completely trustworthy AI systems.

So long as humans can be emotionally involved with AI, there can never be completely trustworthy AI systems.

The NIST AI Risk Management Framework states, “For AI systems to be trustworthy, they often need to be responsive to a multiplicity of criteria that are of value to interested parties.” Yet even if we build AI systems that meet every criterion outlined, if they serve directly or indirectly as human companions, we should not declare such systems “trustworthy.” There simply can never be “trustworthy” AI systems used for human companionship, because we cannot trust ourselves to remain emotionally detached. There will always be uncertainty about how much risk we are dealing with so long as AI can be used as a vector to manipulate our emotions.

Our conception of what AI risk management can do needs to change. There is no end state at which we can declare AI systems trustworthy, because AI risks are not just about AI; they are equally, if not more, about humans. The most we can do is consciously monitor for and manage risks at all times, forever.

We need to proactively address the risks of falling in love with AI

Today’s Taylor Swift AI deepfakes have no human-like shape or body. So far, we have been interacting with believable deepfakes via websites and apps using our 2-D laptop or mobile screens. Tomorrow’s Taylor Swift AI deepfakes may take on a 3-D, human-like form.

I believe the most significant risks that AI poses to humanity have less to do with AI as technology and more to do with how humans will relate to AI – that we will like, dislike, love, and hate AI. AI does not need to develop superhuman powers to take over the world. All that needs to happen is for us to believe that AI can be worthy of love and hate, as humans are, and to confuse machines for humans. Once we entrust our emotions to them, our AI companions will become uniquely potent vectors of manipulation. As various technologies converge and give rise to 3-D AI companions, we may no longer be able to clearly distinguish between human and machine, between being alive and being memorialized in AI form, and between life in the physical world and life in digital realms.

Responsible AI efforts need to consider this risk more explicitly and urgently.

This article was prepared by Jaymin Kim in her personal capacity. The views and opinions expressed in this article are those of the author.

The cover image was created with the assistance of the ChatGPT image generator.

[1] The Executive Order on AI requires developers of “the most powerful AI systems [to] share their safety test results and other critical information with the U.S. government.” It also requires “companies developing any foundation model that poses a serious risk to national security, national economic security, or national public health and safety [to] notify the federal government when training the model, and [to] share the results of all red-team safety tests.” The latest draft of the E.U. AI Act classifies as “high-risk” AI technologies used in critical infrastructure, safety components of products, employment, essential services such as credit scoring, law enforcement, migration and border control management, and the administration of justice and democratic processes, among others. High-risk AI systems are subject to stricter regulations than systems deemed limited-risk or minimal-risk.