If you don’t blindly trust humans, don’t blindly trust AI

Artificial Intelligence (AI) has been making headlines almost daily in 2023. Some of those headlines reflect fear: that chatbots are lying and spreading propaganda.

Historically, the concept of deceptive chatbots existed only in science fiction.

Today, the line between machines and humans is blurring – fast.

In this article, I explain how this line has been blurring over time and what we can expect in the coming decades.

A brief overview of AI and Generative AI

Artificial Intelligence (AI) broadly refers to computational systems that mimic human intelligence - hence “artificial” intelligence. AI systems can be categorized as either:

Narrow AI: AI systems designed to perform a specific task, simulating human intelligence limited to a specific domain. All AI systems developed to date fall into this category.

General AI: Hypothetical AI systems designed to perform any task, generally simulating human intelligence without restriction to a specific domain. General AI does not exist today. Companies like OpenAI aim to develop General AI, “systems that are generally smarter than humans.”

Recent headlines have been referring to Generative AI models, such as ChatGPT, Bard, and DALL-E, which are instances of Narrow AI. These models are versatile and creative in generating new content, but they can do so only in limited, specific domains (e.g., ChatGPT is limited to natural language text, and DALL-E is limited to images).

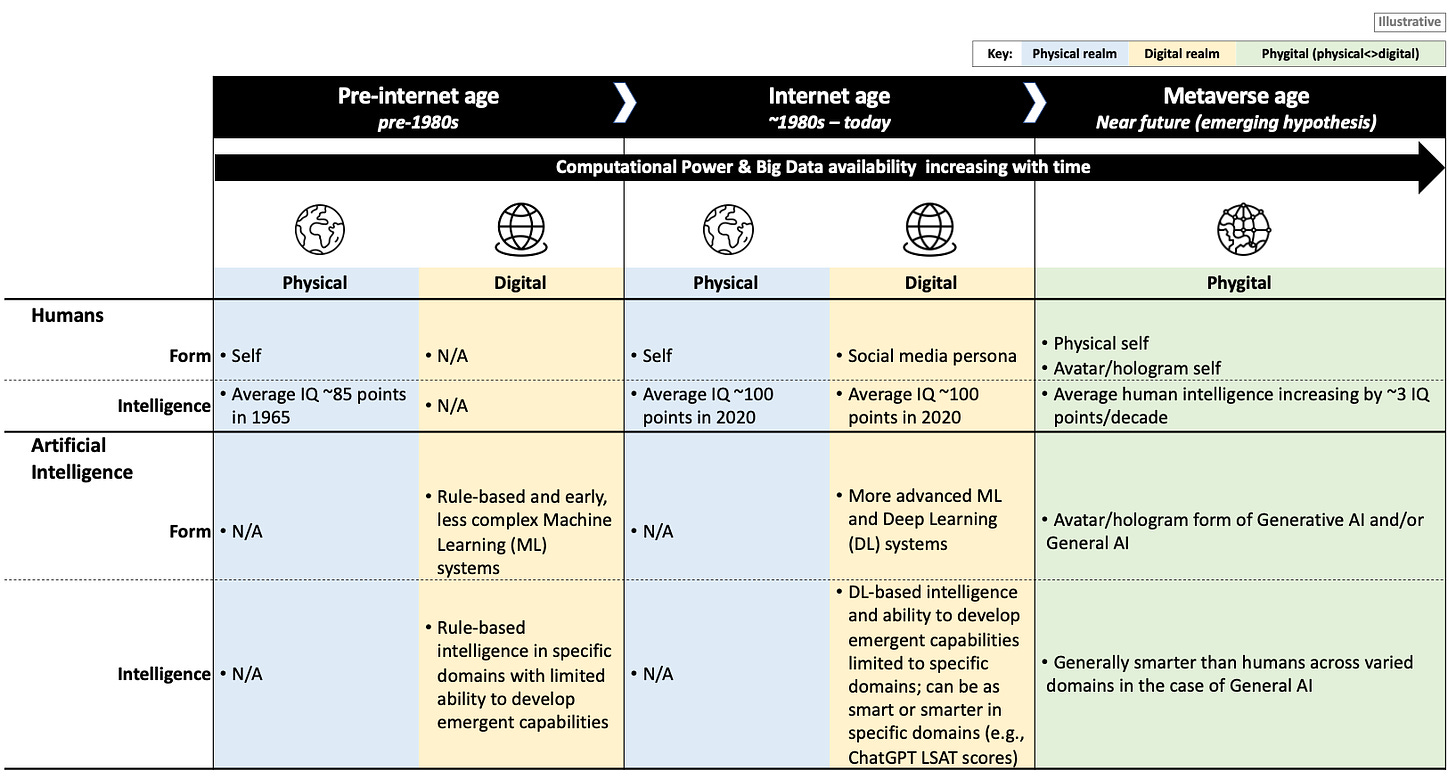

Figure 1: How the line between humans and machines is blurring over time

Generative AI models are not new - the concept can be traced back to the 1950s. However, earlier versions were significantly less complex because computational power and data were scarce and machine learning techniques were less sophisticated. The latest Generative AI models, such as ChatGPT and DALL-E, represent a marked improvement in the diversity and believability of generated outputs, enabled by more computational resources, larger volumes of training data, and advances in deep learning techniques.

Why we are disappointed when today’s Generative AI models “lie”

The believability of the outputs generated by today’s latest Generative AI models is causing some confusion about what these models are – more human or more machine. ChatGPT can respond to our questions about practically any subject we can think of and responds each time with seemingly original, complex, and convincing answers that, historically, we only expected from other humans. This is an important point – for the first time in history, the average retail consumer is interacting with machines that are seemingly human-like. It becomes easy to believe that we may be interacting with another sentient being who responds authoritatively on practically any topic we ask about.

“For the first time in history, the average retail consumer is interacting with machines that are seemingly human-like.”

Sometimes, Generative AI systems produce nonsensical or erroneous outputs that cannot be explained by their training data or other inputs. When this happens, the systems are said to have “hallucinated” (or “confabulated”), and these hallucinations are often what headlines point to when accusing Generative AI systems of lying. Hallucinations tend to occur either when Generative AI systems encounter a scenario outside of their training data or when they try to infer patterns when there are none. We often do not realize that a Generative AI system has hallucinated unless we fact-check – after which we feel “lied” to.
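A toy sketch may make this more concrete. The Python snippet below is only an analogy under loud assumptions: it is not how ChatGPT or any production model works, and the two-sentence corpus and the function names (`train_bigrams`, `all_generations`) are hypothetical, invented purely for illustration. It builds a tiny model that learns only which word tends to follow which, and then lists every sentence that model could generate.

```python
from collections import defaultdict

# Hypothetical toy corpus: every sentence the model is trained on is true.
TRAINING_SENTENCES = [
    "paris is the capital of france",
    "rome is the capital of italy",
]


def train_bigrams(sentences):
    """Record, for each word, the set of words seen directly after it."""
    followers = defaultdict(set)
    for sentence in sentences:
        words = ["<start>"] + sentence.split() + ["<end>"]
        for current, nxt in zip(words, words[1:]):
            followers[current].add(nxt)
    return followers


def all_generations(followers, max_words=10):
    """Enumerate every sentence reachable by following the learned word pairs."""
    results = set()
    stack = [("<start>", [])]
    while stack:
        word, so_far = stack.pop()
        for nxt in followers[word]:
            if nxt == "<end>":
                results.add(" ".join(so_far))
            elif len(so_far) < max_words:  # guard against endless loops
                stack.append((nxt, so_far + [nxt]))
    return results


model = train_bigrams(TRAINING_SENTENCES)
generated = all_generations(model)

# Sentences the model can produce that never appeared in its training data.
invented = sorted(generated - set(TRAINING_SENTENCES))
print(invented)
# → ['paris is the capital of italy', 'rome is the capital of france']
```

Every training sentence was true, yet the model can just as fluently produce two false ones, because it tracks only which words follow which and has no notion of truth. Nothing in its output signals which sentences are wrong; only a fact-check does.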

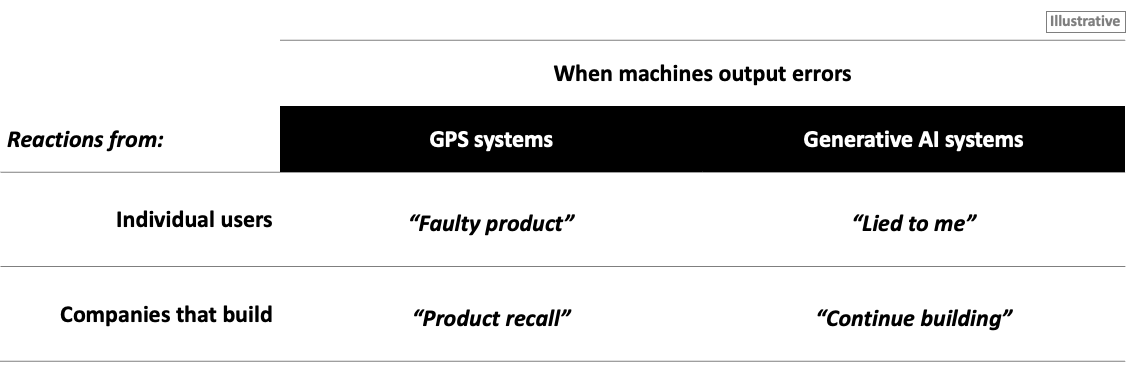

Figure 2: Our different reactions to errors made by GPS systems vs. Generative AI systems

Historically, when machines produced errors, we did not feel “lied” to - when a GPS system fails to give us accurate and reliable directions, we tend to say that it is faulty and ask for a refund. And companies do product recalls when many GPS systems from the same product line fail to deliver accurately.

Today, when Generative AI systems produce errors, we feel “lied” to because we are simultaneously attributing both machine-like and human-like characteristics to them. And companies building advanced Generative AI models like ChatGPT are not doing product recalls (at least, not yet, despite calls to pause development) when their models generate inexplicable outputs and make numerous newsworthy errors. Today’s advanced Generative AI models lack complete explainability – their outputs cannot always be explained or predicted based on their inputs and design. Depending on who you ask, this is a feature, not a bug. Generative AI models mark a milestone in the journey toward developing General AI systems, which would theoretically be able to learn new capabilities in new domains that humans did not originally program them for. To learn entirely new capabilities in new domains, AI systems, by definition, must lack complete explainability.

“Today’s advanced Generative AI models lack complete explainability – their outputs cannot always be explained or predicted based on their inputs and design. Depending on who you ask, this is a feature, not a bug.”

Models like ChatGPT already have (limited) emergent capabilities, which are capabilities that the AI models were not intentionally programmed with. Sometimes, these emergent capabilities can be positive – e.g., translating text into multiple languages despite not being explicitly trained to do so. Other times, they can be negative – e.g., making up believable responses that are factually incorrect.

Our reactions to errors from GPS systems vs. Generative AI systems today differ significantly because we have different expectations of these machines. We expect GPS systems to be machines, period. From Generative AI systems, we expect both the error-free reliability we associate with machines that come with product warranties and the ability to intentionally deceive, which is a human characteristic.

“Today, when Generative AI systems produce errors, we feel ‘lied’ to because we are simultaneously attributing both machine-like and human-like characteristics to them.”

Holograms powered by General AI on Mixed Reality platforms

Today’s ChatGPT has no human-like face or body - despite its human-like, believable responses. We interact with ChatGPT via websites and apps using our two-dimensional laptop or mobile screens.

Tomorrow’s ChatGPT may take on a human-like form. The convergence of various existing and new technologies, including holograms, Mixed Reality (MR), and Generative and/or General AI, will further blend the line between machines and humans. We have already seen the rise of photorealistic avatars (three-dimensional digital versions of our physical selves that appear on two-dimensional screens) and of hologram technology (three-dimensional digital projections that appear in our physical world). Mixed Reality refers to the merging of physical and digital realms, where digital experiences may be superimposed onto our physical world and physical experiences may be brought into our digital world. Some refer to work, life, and play in Mixed Reality broadly as the “Metaverse” – an emerging reality in which our physical and digital experiences merge seamlessly into the “phygital.”

“The convergence of various existing and new technologies, including holograms, Mixed Reality, and Generative and/or General AI, will further blend the line between machines and humans.”

In the coming decade or two, we may be putting on MR glasses to interact with holograms of our human colleagues and friends and holograms of AI bots with three-dimensional faces and bodies. Today many people are already in relationships with AI chatbots in their two-dimensional screen form.

The emergence of AI-powered holograms will force humans to question what it means to be human and raise new challenges for numerous fields, from ethics to cybersecurity. For example, what does it mean to be in a relationship – should we discriminate between human and AI relationships? How do we prove whether we are human or AI? Identity fraud has always been and will continue to be an issue among humans, but in the coming decades, we may be confronting identity fraud among humans and AI bots. Imagine humans pretending to be AI bots, AI bots pretending to be humans, and everything in between.

Already, the line between AI and human agency is blurring. For example, in ongoing copyright infringement lawsuits related to Generative AI, a key point of contention is whether Generative AI-derived outputs can be considered human creations (as a result of human prompts) or are instead machine creations.

The human responsibility to verify AI outputs

We should pause and reflect on what Generative AI systems are. Generative AI systems are designed and built by humans to mimic specific aspects of human intelligence. For example, the deep neural networks underlying today’s latest Generative AI systems were inspired by the complex, interconnected networks of neurons in human brains.

Today’s Generative AI systems are always prone to human error in two ways:

Fallible humans are designing and developing these systems

When a user prompts ChatGPT with a question, and ChatGPT returns a factually incorrect answer, the onus is on the human user to verify that answer before believing it word-for-word. The need to verify what machines recommend is not a new concept. When GPS systems erroneously tell us to drive off what appears to be a cliff, most people implicitly know to override the recommendation and do the opposite. We should not be fooled by the authoritative, human-like responses of Generative AI models – they are ultimately machines made by humans to seem like humans.

Human intelligence itself, which these systems mimic, is fallible

To me, AI hallucinations seem very human – people do not always say “I don’t know” when they do not know, and people sometimes perceive patterns even when there are none (this is known as apophenia; seeing shapes in clouds, or pareidolia, is a familiar example, and overfitting is its analogue in statistics). Generative AI systems’ emergent capabilities – their unpredictability and lack of complete explainability – are what make them seemingly human. Do we blindly trust humans to tell the truth and be error-free? If our answer is no, then how could we blindly trust ChatGPT, which mimics specific aspects of humans, always to tell the truth and be free from error?

Prerequisites to human verification of AI outputs

At a minimum, we need to know when we are interacting with humans vs. AI and when we are reviewing outputs created with partial or complete AI assistance. As technologies like AI, holograms, and MR converge, it will become increasingly challenging to tell who we are interacting with – humans or AI – and what we are looking at – human vs. AI creations.

Today, we have the luxury of knowing that when we interact with ChatGPT, we are interacting with an AI chatbot behind a two-dimensional screen. This luxury will not last. It is already becoming difficult to tell human and AI creations apart, with AI-generated images winning photography awards. Laws mandating disclosure of identity and creation methods may be a prerequisite to ensuring that humans can distinguish between themselves and machines in the coming decades.

“Do we blindly trust humans to tell the truth and be error-free? If our answer is no, then how could we blindly trust ChatGPT, which mimics specific aspects of humans, always to tell the truth and be free from error?”

If you don’t blindly trust humans, don’t blindly trust AI

As we make further advancements in the field of AI, we should make it a habit to verify before believing. The concept of ChatGPT “lying” to us should not be a surprise. If AI systems seem more machine-like, we should verify what they say. If they seem more human-like, we should still verify what they say. Ironically, as AI chatbots take on three-dimensional hologram forms and it becomes increasingly difficult to verify who is human vs. AI, we may become less trusting of each other and of AI.

This article was prepared by Jaymin Kim in her personal capacity. The views and opinions expressed in this article are those of the author.

The cover image was created with the assistance of DALL·E 2.